Designing the Future: An AI-Powered Block Definition Diagram for Autonomous Vehicle Systems

Building a reliable autonomous vehicle system demands more than just coding—it requires a precise, structured understanding of how hardware, software, and real-time decision-making interact. The challenge lies in visualizing this complexity without drowning in technical jargon or losing the big-picture architecture. That’s where the Visual Paradigm AI Chatbot steps in: not just generating diagrams, but co-architecting with you through natural conversation.

From Idea to Architecture: A Collaborative Modeling Journey

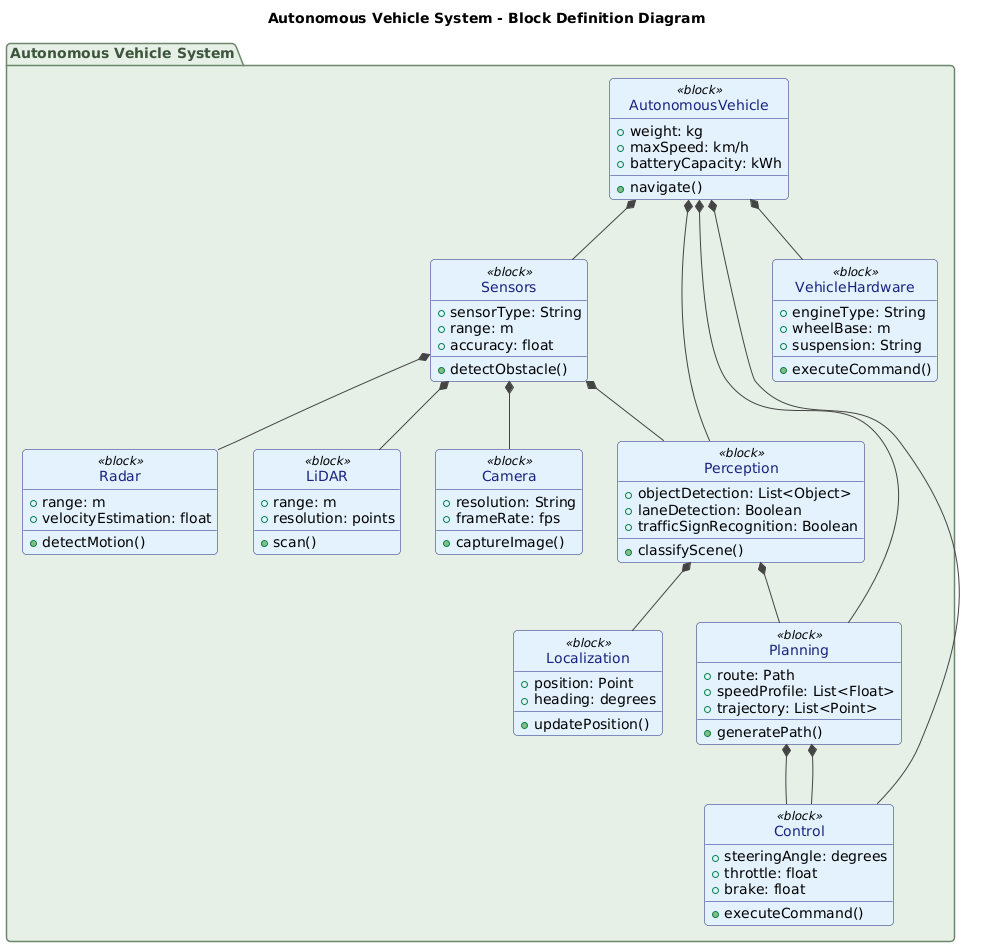

It began with a simple prompt: “Draw a Block Definition Diagram to explain the components of an autonomous vehicle system such as sensors, perception, planning, control, and vehicle hardware.” The AI Chatbot didn’t just render a static diagram; it interpreted the intent, mapped the system’s core blocks, and structured them using SysML’s Block Definition Diagram (BDD) notation.

After the initial generation, the conversation evolved. The user asked: “Explain this diagram.” Instead of a generic summary, the AI delivered a layered, professional breakdown—highlighting not just what each block does, but how they interconnect in a closed-loop system. This wasn’t a one-way output; it was a dialogue.

When the user requested deeper insights—such as clarifying the role of Localization or the flow from Perception to Planning—the AI responded with precision, reinforcing architectural logic and design rationale. For example, it clarified that localization feeds both perception and planning, ensuring that decisions are grounded in accurate positioning. These follow-ups weren’t just answers—they were AI-guided design consultations, refining the model’s fidelity and completeness.

Decoding the System: Logic Behind the Block Definition Diagram

The generated Block Definition Diagram captures the autonomous vehicle as a hierarchical system of interconnected blocks. Each block represents a structural component, and the relationships between blocks define how the system is composed and which components depend on one another.

Core Blocks and Their Roles

- AutonomousVehicle: The root block, acting as the system container. It holds the overall properties (weight, max speed, battery) and owns all subsystems.

- Sensors: A composite block representing LiDAR, Radar, and Camera. Each sensor type is modeled as a sub-block, showing specialized capabilities like high-resolution scanning (LiDAR), motion detection (Radar), and visual recognition (Camera).

- Perception: Processes raw sensor data into actionable understanding—detecting objects, identifying lanes, recognizing traffic signs.

- Planning: Uses perception and localization data to compute safe, efficient routes and speed profiles. It generates a trajectory that the control system can execute.

- Control: Translates the planned trajectory into real-time commands for steering, throttle, and braking.

- VehicleHardware: The physical layer (the engine, suspension, and wheelbase) that executes the commands from the control system.

- Localization: A critical supporting block that estimates the vehicle’s position and orientation in space. Both Perception and Planning rely on it for accurate navigation.
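
The block hierarchy listed above can be sketched in code as a simple ownership tree. This is a minimal illustration, not output from the Visual Paradigm AI Chatbot: the `Block` class, `build_autonomous_vehicle`, and `render` are hypothetical names, and the property identifiers (`weight`, `maxSpeed`, `battery`) are assumed spellings of the properties named in the list.

```python
from dataclasses import dataclass, field


@dataclass
class Block:
    """A structural block: a name, value properties, and owned part blocks."""
    name: str
    properties: list[str] = field(default_factory=list)
    parts: list["Block"] = field(default_factory=list)

    def walk(self, depth: int = 0):
        """Yield (depth, block) pairs for this block and everything it owns."""
        yield depth, self
        for part in self.parts:
            yield from part.walk(depth + 1)


def build_autonomous_vehicle() -> Block:
    # Sensor sub-blocks named in the article.
    sensors = Block("Sensors", parts=[Block("LiDAR"), Block("Radar"), Block("Camera")])
    subsystems = [
        sensors,
        Block("Perception"),
        Block("Planning"),
        Block("Control"),
        Block("VehicleHardware"),
        Block("Localization"),
    ]
    # Property identifiers are assumed; the article lists weight, max speed, battery.
    return Block(
        "AutonomousVehicle",
        properties=["weight", "maxSpeed", "battery"],
        parts=subsystems,
    )


def render(root: Block) -> str:
    """Render the ownership tree as indented text, two spaces per level."""
    return "\n".join("  " * depth + block.name for depth, block in root.walk())


if __name__ == "__main__":
    print(render(build_autonomous_vehicle()))
```

Running the script prints the root block followed by its indented subsystems, which mirrors the composition relationships a BDD would draw from `AutonomousVehicle` to each part.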

Why BDD? The Strategic Choice

Block Definition Diagrams are ideal for this use case because they focus on system structure—not behavior. This makes BDD perfect for:

- Defining the high-level components and their relationships.

- Establishing a shared reference for engineers, architects, and stakeholders.

- Providing a foundation for further modeling (e.g., internal block diagrams, sequence diagrams).

By using BDD, the AI ensured the diagram emphasized modularity and dependency clarity, making it easier to scale, test, and maintain.
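
To make "dependency clarity" concrete, here is a small sketch that records which block consumes input from which, then derives an integration order and the blocks affected by a change. The edges are taken from the descriptions in this article; `DEPENDS_ON`, `integration_order`, and `affected_by` are hypothetical names introduced for illustration. The feedback edge from vehicle hardware back to perception is deliberately left out, because a cycle would make a topological order impossible.

```python
from graphlib import TopologicalSorter

# Each block maps to the blocks it consumes input from, per the article's
# descriptions. The runtime feedback edge is omitted to keep the graph acyclic.
DEPENDS_ON = {
    "Sensors": set(),
    "Localization": set(),
    "Perception": {"Sensors", "Localization"},
    "Planning": {"Perception", "Localization"},
    "Control": {"Planning"},
    "VehicleHardware": {"Control"},
}


def integration_order(depends_on: dict[str, set[str]]) -> list[str]:
    """Return blocks ordered so that each one appears after its dependencies."""
    return list(TopologicalSorter(depends_on).static_order())


def affected_by(block: str, depends_on: dict[str, set[str]]) -> set[str]:
    """Return every block that directly or transitively depends on `block`."""
    affected: set[str] = set()
    frontier = [block]
    while frontier:
        current = frontier.pop()
        for name, deps in depends_on.items():
            if current in deps and name not in affected:
                affected.add(name)
                frontier.append(name)
    return affected


if __name__ == "__main__":
    print(integration_order(DEPENDS_ON))
    print(sorted(affected_by("Localization", DEPENDS_ON)))
```

With the structure written down this way, a change to one block (say, Localization) can be traced to the downstream blocks that may need retesting.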

Conversational Intelligence in Action

The real power of the Visual Paradigm AI Chatbot lies in its ability to respond to natural language queries with architectural insight. When the user asked for an explanation, the AI didn’t just list blocks—it provided a narrative of how the system operates as a feedback loop:

- Sensors → Perception → Planning → Control → Vehicle Hardware → Feedback → Perception

This loop illustrates the continuous, adaptive nature of autonomous driving—where every decision is informed by real-time data.
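
The loop above can be sketched as a toy simulation in which each stage is a function and the updated vehicle state is fed back into the next sensing step. Everything here is an assumption made for illustration: the one-dimensional road, the obstacle position, the gains, and the actuator limits are invented values, and the function names are hypothetical. It is not how a production stack or the chatbot's diagram implements these stages.

```python
from dataclasses import dataclass


@dataclass
class VehicleState:
    position: float = 0.0  # metres along a straight road
    speed: float = 0.0     # metres per second


OBSTACLE_POSITION = 50.0  # hypothetical obstacle location, metres
DT = 0.5                  # simulation step, seconds


def sense(state: VehicleState) -> dict:
    """Sensors: report raw range to the obstacle and current speed."""
    return {"range": OBSTACLE_POSITION - state.position, "speed": state.speed}


def perceive(raw: dict) -> dict:
    """Perception: turn raw readings into a scene description."""
    return {"obstacle_distance": max(raw["range"], 0.0), "speed": raw["speed"]}


def plan(scene: dict) -> float:
    """Planning: choose a target speed that drops as the obstacle gets closer."""
    safe_gap = 5.0  # assumed stopping margin, metres
    return max(0.0, min(10.0, 0.5 * (scene["obstacle_distance"] - safe_gap)))


def control(target_speed: float, scene: dict) -> float:
    """Control: proportional acceleration command, clamped to assumed limits."""
    return max(-4.0, min(2.0, target_speed - scene["speed"]))


def actuate(state: VehicleState, accel: float) -> VehicleState:
    """Vehicle hardware: apply the command and produce the next physical state."""
    speed = max(0.0, state.speed + accel * DT)
    return VehicleState(position=state.position + speed * DT, speed=speed)


def run(steps: int) -> VehicleState:
    """Run the closed loop; each new state is what the sensors observe next."""
    state = VehicleState()
    for _ in range(steps):
        raw = sense(state)
        scene = perceive(raw)
        target = plan(scene)
        accel = control(target, scene)
        state = actuate(state, accel)  # feedback into the next iteration
    return state


if __name__ == "__main__":
    print(run(200))
```

In this toy setup the vehicle accelerates, then slows and comes to rest short of the obstacle, because every planning decision is based on the state produced by the previous actuation step.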

Further, the AI proactively offered next steps: “Let me know if you’d like a version with color coding, a sequence diagram of how it works, or a C4 model of the same system!” This isn’t just diagram generation—it’s design partnership.

More Than Just BDD: A Full-Spectrum AI Modeling Platform

While this example focused on SysML’s Block Definition Diagram, the Visual Paradigm AI Chatbot is built to support a full spectrum of modeling standards. Whether you’re designing enterprise architecture with ArchiMate, modeling complex systems with SysML, visualizing software architecture with C4 Model, or mapping organizational structures with Org Charts, the AI adapts to your needs.

It handles:

- UML: Class, sequence, state, and activity diagrams.

- ArchiMate: Business, application, and technology layers.

- SysML: BDD, internal block diagrams, activity diagrams.

- C4 Model: Context, containers, components, and code.

- Visual Planning Tools: Mind maps, PERT charts, SWOT, PEST, and various data charts (pie, line, area).

This versatility makes Visual Paradigm not just a diagramming tool, but an AI-powered visual modeling platform that evolves with your project—from concept to deployment.

Conclusion: Designing Smarter, Together

The autonomous vehicle system is a marvel of engineering, but its success depends on a clear, accurate, and collaborative design process. With the Visual Paradigm AI Chatbot, you’re not just generating diagrams—you’re engaging in a dynamic, intelligent conversation that shapes the architecture from the ground up.

Whether you’re a systems architect, software engineer, or product designer, the platform empowers you to model complex systems with confidence, clarity, and speed.

Ready to build the next generation of intelligent systems? Start your conversation with the Visual Paradigm AI Chatbot and see how natural language can transform your design process.